In digital communication, data is represented as a binary bitstream of 0s and 1s. In practice, real-world data is rarely truly random — it often contains repetitive patterns, especially long runs of identical bits (e.g., ...000000... or ...111111...). At low speeds this causes no real harm, but at 1 Gbps and beyond, such patterns create critical signal integrity problems. At 1 Gbps, one bit period is just 1 nanosecond, so a run of tens or hundreds of identical bits means the signal voltage stays constant for a relatively long time.

Problems Caused by Non-Random Data Patterns

Non-random patterns create two major problems in high-speed links.

1. Loss of signal transitions — When a long run of identical bits occurs, there are no voltage edges in the signal.

2. Spectral peaking — The power spectral density (PSD) becomes concentrated at specific frequencies, creating sharp spikes in the frequency domain.

These two effects cascade into receiver performance degradation. Absent transitions starve the Clock and Data Recovery (CDR) circuit of timing references, causing it to lose synchronization. Spectral concentration increases intermodulation with adjacent channels and boosts electromagnetic interference (EMI), making it harder to comply with regulatory standards such as FCC limits.

CDR Starvation

In multi-gigabit serial links, no dedicated clock line is sent alongside the data. The receiver must extract clock information directly from the incoming data stream by detecting voltage transitions — a process performed by the CDR circuit. The CDR samples the rising and falling edges of the data to reconstruct a recovered clock, which is then used to latch each data bit at the correct moment.

When long runs of identical bits cause transitions to disappear, the CDR loses its timing reference. This leads to clock instability (timing jitter), prevents the recovered clock from staying synchronized with the data, and ultimately increases the Bit Error Rate (BER). Ensuring adequate transition density in the data stream is therefore a fundamental requirement of any multi-gigabit serial system. A scrambler addresses this directly — regardless of the input data pattern, it introduces transitions to help the CDR maintain a stable lock.

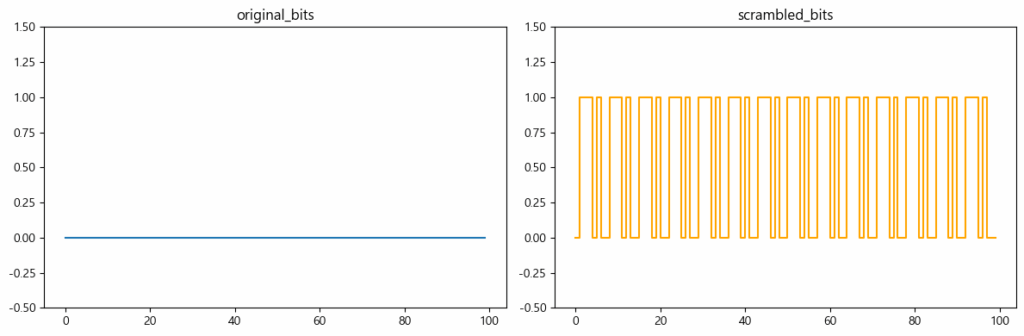

Original bitstream vs. scrambled bitstream — note the increased transition density after scrambling.

Original bitstream vs. scrambled bitstream — note the increased transition density after scrambling.

Power Spectral Density and EMI

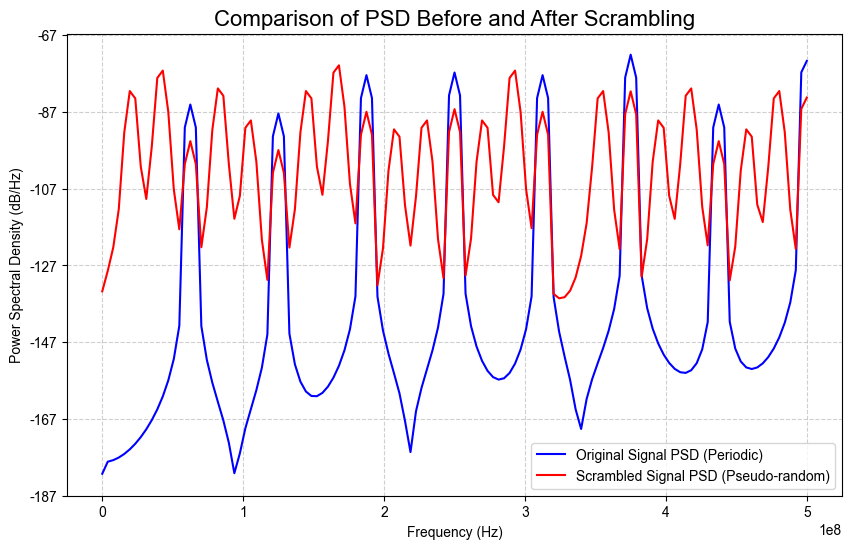

Power Spectral Density (PSD) describes how signal power is distributed across frequencies. Repetitive or periodic data patterns concentrate signal energy at specific frequency bands, forming sharp spectral peaks.

These peaks have two serious consequences:

- They can violate regulatory emission limits (e.g., FCC Part 15).

- The concentrated energy causes electromagnetic radiation that interferes with adjacent communication channels, leading to crosstalk.

A scrambler statistically randomises the input data so that signal power spreads evenly across a wide frequency band. This flattens the PSD, eliminates spectral peaks, reduces EMI, and helps the system meet regulatory requirements.

Scrambling as Statistical Whitening

A scrambler is not a security device — it does not make data unreadable. Its purpose is to impose useful engineering properties on the transmitted bitstream. The process is often called statistical whitening: the data stream is mathematically transformed so that it appears statistically random (pseudo-random), even if the original data is highly repetitive.

The most common implementation uses a Linear Feedback Shift Register (LFSR) to generate a Pseudo-Random Binary Sequence (PRBS). The PRBS is XOR’d (modulo-2 added) with the original data stream:

scrambled[n] = data[n] XOR prbs[n]

At the receiver, a descrambler applies the same PRBS with the same XOR operation to perfectly recover the original data:

recovered[n] = scrambled[n] XOR prbs[n] = data[n]

Because the LFSR generates a deterministic sequence, the transmitter and receiver stay synchronized without any extra overhead.

Scrambling vs. Line Coding

As high-speed standards have evolved, the relationship between scrambling and line coding has changed significantly.

Older standards (Gen1, Gen2): 8B/10B encoding maps every 8-bit data byte to a 10-bit symbol, guaranteeing sufficient transitions, DC balance, and bounded run length. Signal integrity is built into the code itself.

The cost: 8B/10B imposes a 25% bandwidth overhead — for every 8 bits of payload, 10 bits must be transmitted. At speeds above 1 Gbps, this overhead significantly reduces effective throughput.

Modern standards (PCIe Gen3+, USB 3.x, Ethernet 25G+): 8B/10B is replaced by a combination of scrambling and 128B/130B encoding. The overhead drops to just 2/130 ≈ 1.56%, while the scrambler ensures the statistical randomness that 8B/10B achieved through explicit line coding.

In summary: at multi-gigabit speeds, bandwidth efficiency is paramount, and a scrambler with near-zero overhead has become the primary mechanism for guaranteeing signal randomness.

Summary

| Concern | Without Scrambling | With Scrambling |

|---|---|---|

| CDR lock | May lose lock on long runs | Stable — transitions always present |

| EMI / spectral peaks | Problematic spectral spikes | Flat PSD, EMI reduced |

| Bandwidth overhead | 0% (but line coding adds 25%) | ~0% (paired with 128B/130B, ~1.56%) |

| Implementation | N/A | Self-synchronous LFSR, minimal logic |